Docker syslog driver

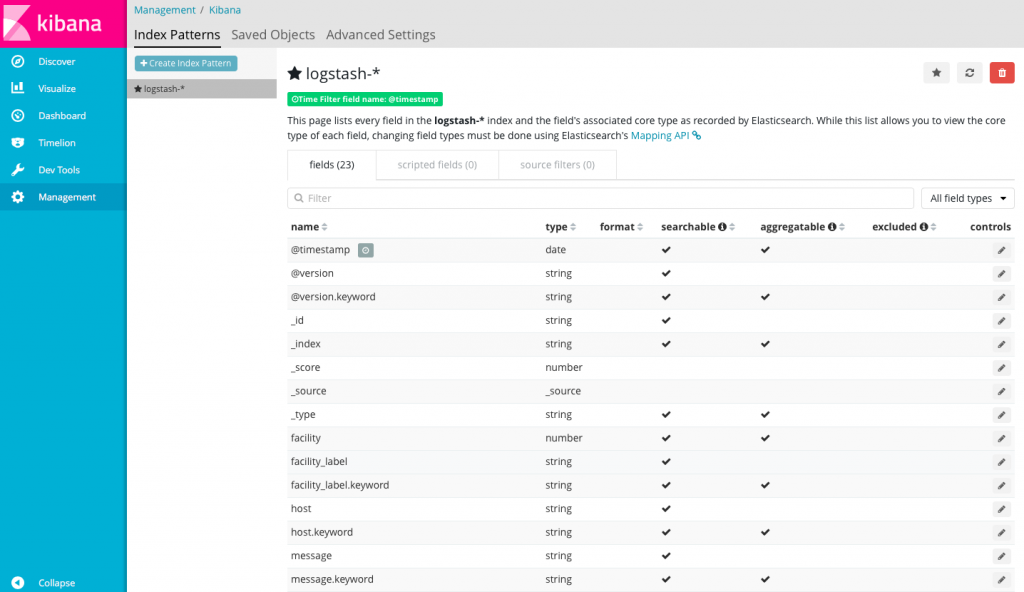

DOCKER SYSLOG DRIVER SERIES

In previous blogs from this series we discussed how we formatted uwsgi and Python logs using JSON. Properly formatted logs are, however, useless if you don't have a way of accessing them. Reading raw log files directly on servers works well when you only have a handful of servers, but it quickly becomes cumbersome. We deploy our microservices redundantly across a cluster of servers, so reading log files on each server directly is not an option. To make log inspection feasible, we need to collect the logs from all of these machines and send them to one central location.

As discussed in uwsgi JSON logging, the idea behind using JSON logs is to simplify the log collection stack: instead of using Filebeat, Logstash and Elasticsearch, we can simply use Fluent Bit + Elasticsearch.

Popular log collection solutions: Logstash and Beats

Logstash is one of the most popular solutions out there, and with good reason: it was built by the same folks that make Elasticsearch. It does not, however, do any log collection itself. For that purpose you can use lightweight data shippers called Beats. In our case, we are interested in Filebeat, a shipper intended to collect log data from files. Filebeat can read log files of various formats, do basic processing, and finally send the data to Logstash or directly to Elasticsearch. Kafka and Redis outputs are also supported.
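To illustrate why JSON logs simplify shipping, here is a minimal Python sketch of what a file-based shipper does at its core. It is not Filebeat's actual implementation. It parses each log line as JSON (no per-format parsing patterns needed) and batches the records into the newline-delimited body that the Elasticsearch `_bulk` API expects. The index name `logs` and the helper names `parse_json_lines` and `build_bulk_body` are hypothetical. File tailing, HTTP delivery, and retries are deliberately left out.

```python
import json
from typing import Iterable, Iterator


def parse_json_lines(lines: Iterable[str]) -> Iterator[dict]:
    """Yield one dict per JSON log line, skipping blank or malformed lines."""
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            # A real shipper would count or forward these; the sketch just drops them.
            continue
        # Only JSON objects become documents; arrays or bare values are skipped.
        if isinstance(record, dict):
            yield record


def build_bulk_body(records: Iterable[dict], index: str = "logs") -> str:
    """Build an Elasticsearch _bulk request body (NDJSON).

    Each document is preceded by an action line, and a non-empty body
    ends with a trailing newline. The default index name is hypothetical.
    """
    parts = []
    for record in records:
        parts.append(json.dumps({"index": {"_index": index}}))
        parts.append(json.dumps(record))
    return "\n".join(parts) + "\n" if parts else ""


if __name__ == "__main__":
    raw = [
        '{"level": "INFO", "msg": "request handled", "status": 200}',
        "not json at all",
        "",
        '{"level": "ERROR", "msg": "upstream timeout"}',
    ]
    body = build_bulk_body(parse_json_lines(raw))
    print(body, end="")
```

Parsing comes down to a single `json.loads` call per line, which is the simplification the JSON format buys. With unstructured log lines, this step would need a separate parsing rule for every log format.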
